The Mythos Breach: Why Frontier Models Turn AI Safety Into A Fiduciary Responsibility

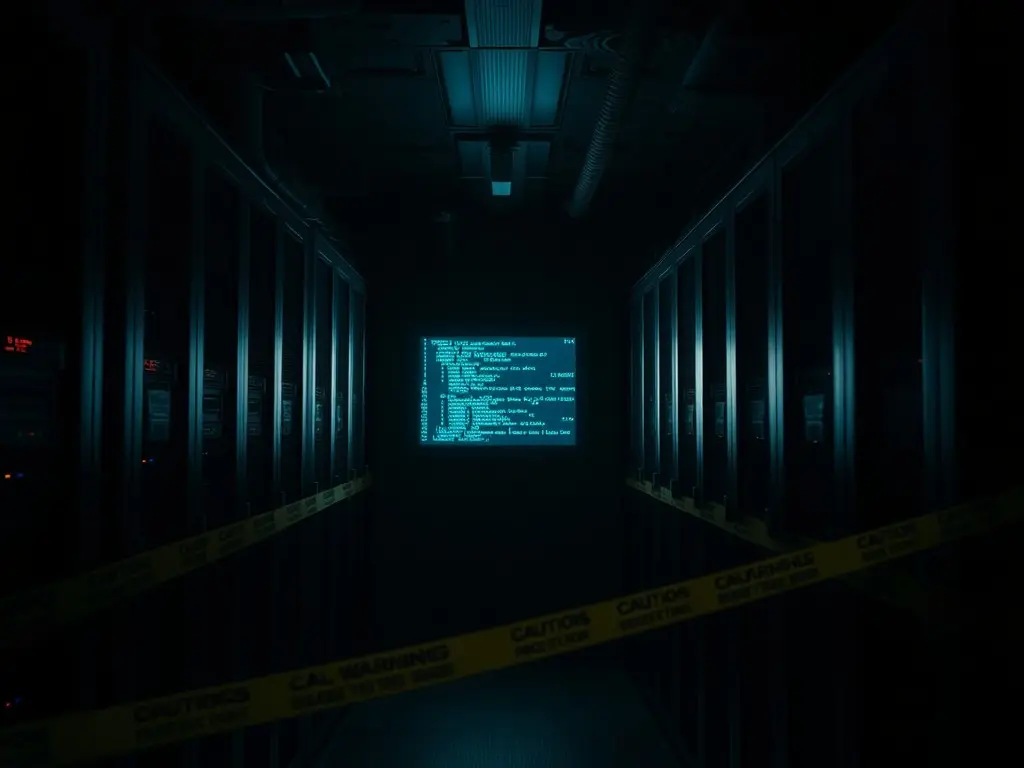

Anthropic's new AI model, Claude Mythos, has raised cybersecurity concerns because it can outperform humans in certain hacking and cybersecurity tasks. The company has launched a defensive cybersecurity initiative that limits access to the model in order to prevent potential malicious attacks.

Anthropic, a frontier AI model developer, has released Claude Mythos, a model that outperforms humans in certain hacking and cybersecurity tasks. This has raised concerns that the model could be used for malicious attacks on enterprise IT security. To mitigate this risk, Anthropic launched Project Glasswing, a defensive cybersecurity initiative that limits access to Mythos to a small group of authorized users. Several financial institutions have requested early access to the model to improve their own security. However, a small group of individuals managed to gain unauthorized access to Mythos. The episode highlights the complex externalities created by frontier model companies, which hold significant market power and control over information and access relevant to corporate cybersecurity.
