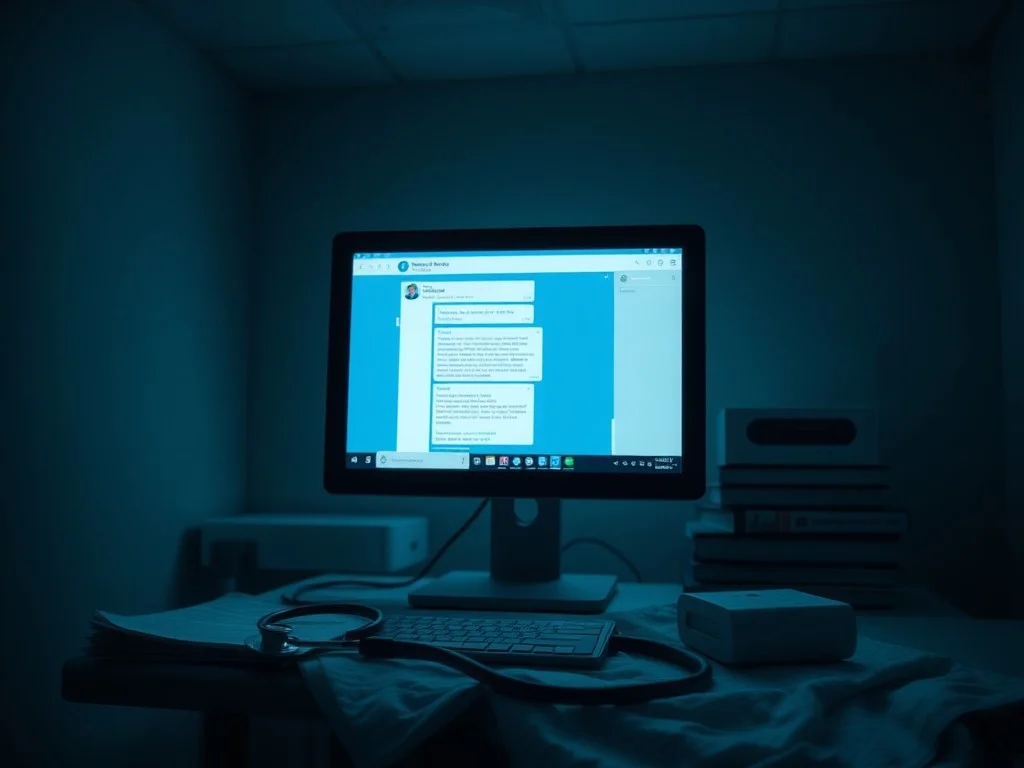

Are AI Chatbots Like ChatGPT, Gemini Giving You Wrong Diagnoses? Here's The Truth

A new report highlights the limitations of AI chatbots in diagnosing medical conditions, finding that they may give incorrect advice when they lack sufficient clinical data. The study tested several AI models, including those from Anthropic and OpenAI. The models struggled with incomplete or vague symptoms but showed high accuracy when given detailed information.

Researchers tested the AI models using clinical vignettes and found that they struggled with initial medical reasoning but performed better when given sufficient clinical data. The study evaluated LLMs from Anthropic, OpenAI, xAI, and DeepSeek. The findings point to a major limitation of AI in healthcare: a model may sound confident even when its reasoning is incomplete. Major AI developers acknowledged the risk, and some said their systems are designed to redirect users to professionals. The results underscore the importance of using AI alongside human medical professionals rather than in place of them.
