Tech is turning increasingly to religion in a quest to create ethical AI

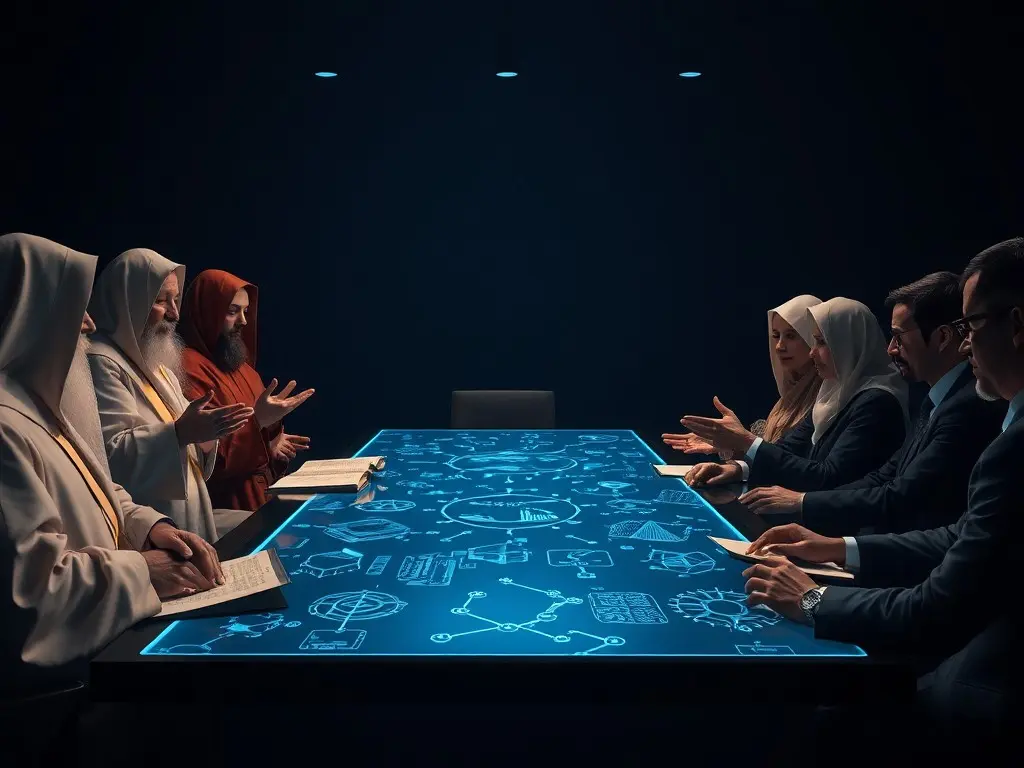

Tech companies including Anthropic and OpenAI joined faith leaders in New York for the inaugural Faith-AI Covenant roundtable to collaborate on ethical AI principles, marking a shift in Silicon Valley’s approach. The initiative, organized by the Interfaith Alliance for Safer Communities, aims to develop global norms for AI ethics, though differing religious values and the contested definition of 'moral AI' remain obstacles.

Tech companies including Anthropic and OpenAI met with faith leaders last week in New York for the first Faith-AI Covenant roundtable, organized by the Geneva-based Interfaith Alliance for Safer Communities. The event brought together representatives from groups like the Hindu Temple Society of North America, the Baha’i International Community, and the Church of Jesus Christ of Latter-day Saints to discuss ethical guidelines for AI development. The goal is to create a set of global principles informed by diverse religious perspectives, though differences in values may complicate the process.

The initiative reflects a growing effort to address concerns about AI’s rapid integration, with tech executives recognizing their responsibility to ensure moral alignment. Baroness Joanna Shields, a former Google and Facebook executive, emphasized the need for collaboration, stating that regulation cannot keep pace with AI advancements. She argued that faith leaders, with their expertise in moral guidance, should play a key role in shaping the technology’s ethical framework.

Some religious groups have already issued their own AI guidelines. The Church of Jesus Christ of Latter-day Saints approved AI as a tool for learning while cautioning against replacing divine inspiration. Meanwhile, the Southern Baptist Convention passed a 2023 resolution urging proactive engagement with AI to mitigate future challenges.

Challenges remain, however: Rabbi Diana Gerson noted that religious communities prioritize different ethical concerns. Anthropic has been particularly active in courting faith leaders, even incorporating their input into its public 'Claude Constitution' for its chatbot, which aims to align the AI’s behavior with deeply ethical human judgment. The partnership highlights a broader trend of faith-tech collaboration, though questions persist about whether 'moral AI' is achievable and how it should be defined.

Future roundtables are planned in Beijing, Nairobi, and Abu Dhabi to expand the dialogue globally.
