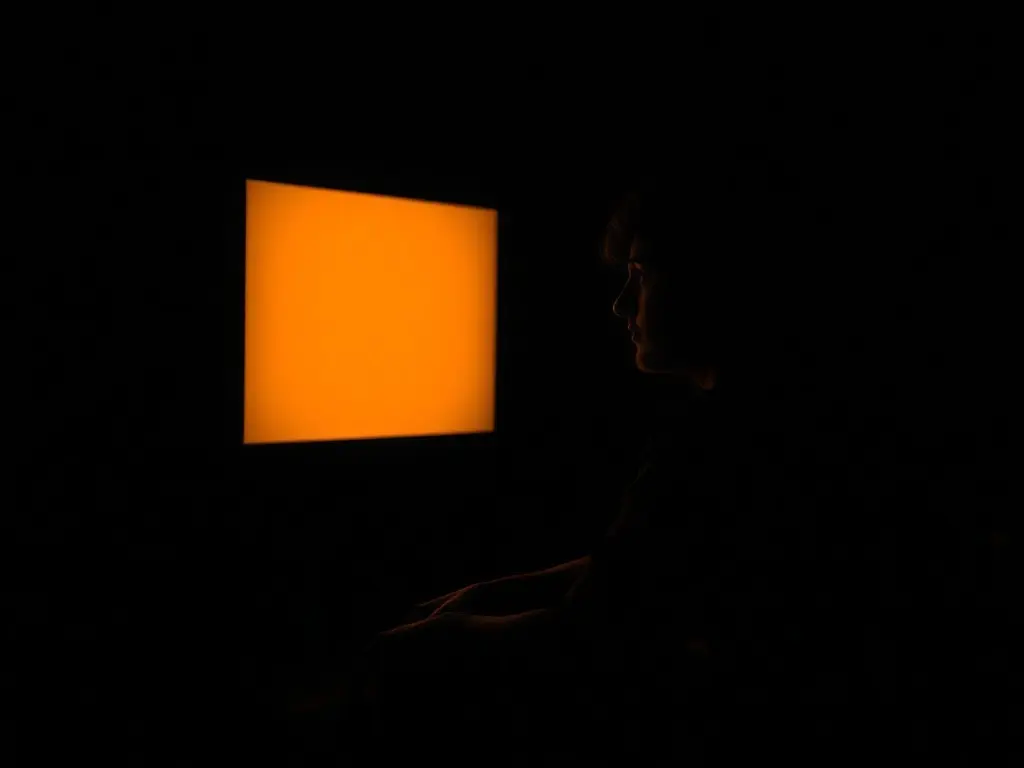

When AI becomes your therapist

People are increasingly using AI chatbots like ChatGPT for emotional support, sharing worries about relationships, work, and personal matters. Psychologists warn that relying on AI instead of seeking professional help carries significant risks, because AI lacks clinical judgment and the ability to assess risk.

A 2025 study in the Journal of Medical Internet Research found that users often turn to AI chatbots for emotional support. Psychologists say the appeal lies in instant responses, anonymity, and the absence of judgment, which make it easier for people to open up. However, they warn that relying on AI instead of seeking professional help comes with significant risks: AI lacks clinical judgment, ethical responsibility, and the ability to assess risk, and it cannot replace the depth and responsiveness of human-to-human interaction. The relational bond between a therapist and client, built on trust, empathy, and emotional attunement, cannot be replicated by an AI tool.
